Code-Shaped Arbitrage

Convert your problem into a code-shaped problem

Welcome to Infinite Curiosity, a newsletter that explores the intersection of Artificial Intelligence and Startups. Tech enthusiasts across 200 countries have been reading what I write. Subscribe to this newsletter for free to receive it directly in your inbox.

One of the biggest mistakes people make about AI is assuming LLMs are equally good at all kinds of reasoning. They’re not.

They’re much better at some forms than others. And one pattern is getting clearer with every frontier model release. LLMs are especially strong at problems that can be made to look like code.

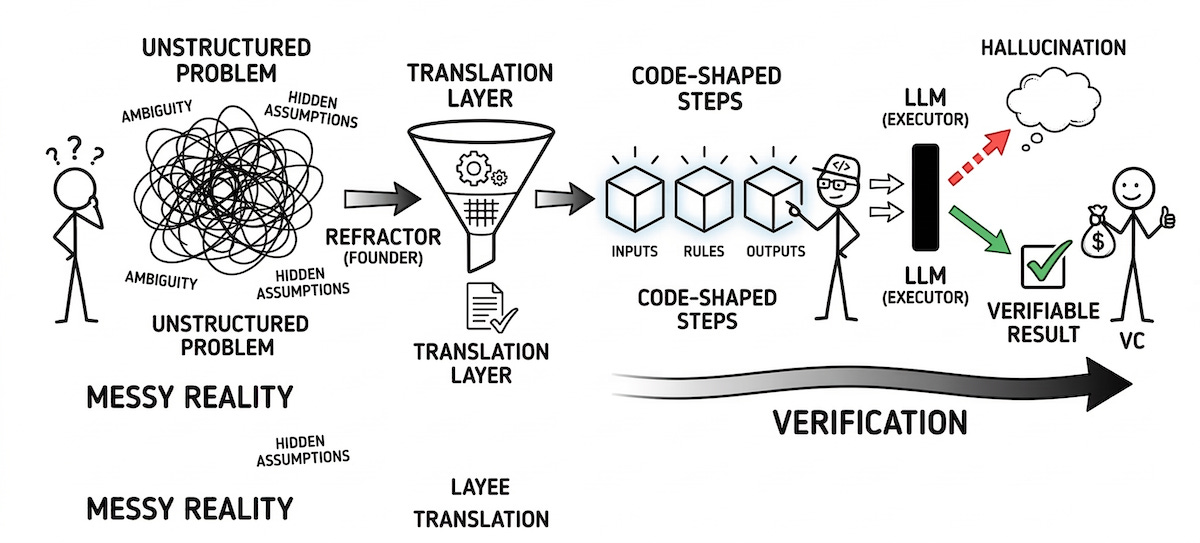

That matters because the shift is not just that models can now write software. It is that software has become a template for making messy work legible to machines. Once a process is turned into explicit steps, structured states, and constrained outputs, model performance usually improves fast. The model stops drifting through ambiguity and starts operating inside a shape it understands.

That is the arbitrage.

Why Code Works So Well

Code is one of the cleanest forms of human thought we’ve ever produced at scale. A program has inputs, transformations, edge cases, outputs, and tests. It makes logic explicit.

Natural language does the opposite. It is full of ambiguity, hidden assumptions, and implied context. Humans handle that naturally. Models do not. They can sound fluent in language, but fluency is not the same as reliability. The moment precision matters, code-like structure starts to outperform plain English.

What Frontier Labs Have Figured Out

This helps explain why frontier labs like OpenAI and Anthropic keep making large gains on coding tasks. Code gives models a better environment for reasoning.

When a model writes a function or debugs a program, it is working in a system with constraints, feedback, and testable outputs. In plain English, code gives the model rails. And once the rails are there, the model becomes far more useful.

That is why coding progress matters beyond software. It signals that the models are strongest inside structured, checkable problem spaces.

The Founder Opportunity: Refactor the World

For founders, the opportunity is not in producing enormous amounts of code every day. That doesn't matter much, because code generation is now nearly free.

So where is the opportunity? It's in taking a domain that still lives in messy human language and turning it into something closer to a programming environment. The founder's question becomes:

“How do I convert this workflow into states, rules, transformations, and verification steps that a model can safely operate on?”

You can already see this across sectors. In legal tech, you can summarize contracts, but the critical move is turning them into obligations, exceptions, and risk checks. In enterprise software, strong agent products increasingly look like workflow engines with model calls inside them rather than open-ended chat interfaces.
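To make the legal-tech example concrete, here is a minimal Python sketch of a workflow engine with a single model call inside it. Everything here is hypothetical: `fake_model_extract` stands in for a real LLM call and returns hard-coded obligations, and the field names and the 90-day threshold are illustrative assumptions, not a standard. The point is the shape: explicit states, typed obligations, and deterministic risk checks that run after the model has done its one job.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Optional


class State(Enum):
    EXTRACT = auto()
    CHECK = auto()
    APPROVED = auto()
    NEEDS_REVIEW = auto()


@dataclass
class Obligation:
    party: str
    action: str
    deadline_days: Optional[int]  # None means no deadline was found in the text


@dataclass
class Case:
    contract_text: str
    obligations: list[Obligation] = field(default_factory=list)
    risks: list[str] = field(default_factory=list)
    state: State = State.EXTRACT


def fake_model_extract(text: str) -> list[Obligation]:
    """Hypothetical stand-in for an LLM call that returns structured obligations.
    A real system would prompt a model and parse its output; this returns fixed data."""
    return [
        Obligation(party="Supplier", action="deliver goods", deadline_days=30),
        Obligation(party="Buyer", action="pay invoice", deadline_days=None),
    ]


def risk_checks(obligations: list[Obligation]) -> list[str]:
    """Deterministic rules with no model involved, so results are reproducible."""
    risks = []
    for ob in obligations:
        if ob.deadline_days is None:
            risks.append(f"{ob.party} obligation '{ob.action}' has no deadline")
        elif ob.deadline_days > 90:
            risks.append(f"{ob.party} obligation '{ob.action}' exceeds 90 days")
    return risks


def step(case: Case) -> Case:
    """Advance the workflow by exactly one explicit state transition."""
    if case.state is State.EXTRACT:
        case.obligations = fake_model_extract(case.contract_text)  # the only model call
        case.state = State.CHECK
    elif case.state is State.CHECK:
        case.risks = risk_checks(case.obligations)
        case.state = State.NEEDS_REVIEW if case.risks else State.APPROVED
    return case


def run(case: Case) -> Case:
    """Step until the case reaches a terminal state (APPROVED or NEEDS_REVIEW)."""
    while case.state in (State.EXTRACT, State.CHECK):
        case = step(case)
    return case


result = run(Case(contract_text="(contract text goes here)"))
print(result.state.name)  # NEEDS_REVIEW
print(result.risks)       # ["Buyer obligation 'pay invoice' has no deadline"]
```

Because the risk checks are plain code, the same extracted obligations always land in the same state, and a human only needs to look at cases routed to NEEDS_REVIEW.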

Why Prompting Is Not Enough

This is why “just use better prompts” is not a real plan. Prompting matters, but prompts are still made of language. And language carries the same ambiguity as the original problem.

The bigger leap comes from adding structure around the model. This includes things like schemas, typed outputs, tools, evaluators, memory, and tests. In other words, making the problem more code-shaped.

That is where product quality starts to separate. Not in who writes the best prompt, but in who builds the best system around the model.
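Here is one way "typed outputs" can look in practice: a minimal Python sketch, using only the standard library, that refuses to accept a model reply unless it matches a schema. The `RiskFlag` fields and the three severity levels are hypothetical choices for illustration, and the two replies are made up rather than real model output. A production system might use a dedicated validation library instead, but the principle is the same.

```python
import json
from dataclasses import dataclass

# Hypothetical schema for illustration: the fields and allowed values are assumptions.
REQUIRED_FIELDS = {"clause_id", "severity", "reason"}
ALLOWED_SEVERITIES = {"low", "medium", "high"}


@dataclass(frozen=True)
class RiskFlag:
    clause_id: str
    severity: str  # one of ALLOWED_SEVERITIES
    reason: str


def parse_risk_flags(raw: str) -> list[RiskFlag]:
    """Turn a model's raw JSON reply into typed objects, rejecting anything off-schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON list of flags")
    flags = []
    for i, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"item {i}: expected an object")
        missing = REQUIRED_FIELDS - set(item)
        if missing:
            raise ValueError(f"item {i}: missing fields {sorted(missing)}")
        severity = item["severity"]
        if not isinstance(severity, str) or severity not in ALLOWED_SEVERITIES:
            raise ValueError(f"item {i}: invalid severity {severity!r}")
        flags.append(RiskFlag(str(item["clause_id"]), severity, str(item["reason"])))
    return flags


# Two made-up model replies: one on-schema, one with an invented severity level.
good = '[{"clause_id": "7.2", "severity": "high", "reason": "uncapped liability"}]'
bad = '[{"clause_id": "7.2", "severity": "critical", "reason": "uncapped liability"}]'

print(parse_risk_flags(good))
try:
    parse_risk_flags(bad)
except ValueError as err:
    print(err)  # item 0: invalid severity 'critical'
```

A rejected reply is not a dead end: the error message is specific enough to send back to the model as feedback, which is far more useful than a generic "try again".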

From Hallucination to Verification

Once a problem becomes more code-shaped, you move from the hallucination zone to the verification zone.

If a model gives you a paragraph, a human often has to read it and judge whether it is correct. If a model gives you structured logic or a testable transformation, you can often verify it automatically.

That changes the economics. Verification creates reliability and reliability creates enterprise value. The moment model output can be tested instead of merely reviewed, AI starts to look more like infra.
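As a rough illustration of testing instead of reviewing, suppose a model extracts line items from an invoice. The Python sketch below checks that extraction against arithmetic invariants that must hold no matter how the model reasoned. The field names (`line_items`, `tax`, `total`) and the specific checks are assumptions for illustration; amounts are kept as strings and parsed with `Decimal` to avoid floating-point rounding.

```python
from decimal import Decimal


def verify_invoice(extracted: dict) -> list[str]:
    """Check a model's structured invoice extraction against arithmetic invariants.
    Returns the failed checks; an empty list means every check passed."""
    failures = []
    items = [Decimal(item["amount"]) for item in extracted.get("line_items", [])]
    tax = Decimal(extracted.get("tax", "0"))
    total = Decimal(extracted["total"])

    if not items:
        failures.append("no line items extracted")
    if any(amount < 0 for amount in items):
        failures.append("negative line item")
    computed = sum(items, Decimal("0")) + tax
    if computed != total:
        failures.append(f"line items + tax = {computed}, but total = {total}")
    return failures


# Made-up extractions: the second has two digits transposed in the total.
good = {"line_items": [{"amount": "120.00"}, {"amount": "30.50"}], "tax": "15.05", "total": "165.55"}
bad = {**good, "total": "156.55"}

print(verify_invoice(good))  # []
print(verify_invoice(bad))   # ['line items + tax = 165.55, but total = 156.55']
```

Neither check requires a human to read the output or to trust the model. A transposed digit that a skimming reviewer could miss fails deterministically.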

The Next Wave of AI Infra

One of the most important themes in AI infra over the next few years will be systems that convert natural-language problems into executable ones. Not necessarily code in the narrow sense of Python or Rust, but code in the broader sense of explicit structure.

That could mean domain-specific languages, planning graphs, policy engines, typed interfaces, simulation environments, or evaluation harnesses. The implementation will vary, but the principle will stay the same. Reduce ambiguity, increase structure, tighten feedback.

The big opportunity is in building systems where answers can be checked.
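The principle above (reduce ambiguity, increase structure, tighten feedback) can be sketched as a small generate-verify-retry loop in Python. This is an illustrative pattern, not any particular lab's or vendor's method: `fake_model` is a hypothetical stand-in for a model call, and the single date-format check is a placeholder for whatever domain checks a real system would run.

```python
from datetime import date
from typing import Callable, Optional

# A check takes a candidate answer and returns an error message, or None if it passes.
Check = Callable[[str], Optional[str]]


def generate_with_feedback(
    generate: Callable[[list[str]], str],
    checks: list[Check],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Generate, verify, and retry, feeding failed checks back into the next attempt.
    Returns the first passing candidate and the attempt number it passed on."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        candidate = generate(feedback)
        errors = []
        for check in checks:
            message = check(candidate)
            if message is not None:
                errors.append(message)
        if not errors:
            return candidate, attempt
        feedback = errors  # the next attempt sees exactly what went wrong
    raise RuntimeError(f"no candidate passed after {max_attempts} attempts: {feedback}")


def is_iso_date(candidate: str) -> Optional[str]:
    """Example check: the answer must be a valid YYYY-MM-DD date."""
    try:
        date.fromisoformat(candidate)
        return None
    except ValueError:
        return f"{candidate!r} is not a valid YYYY-MM-DD date"


def fake_model(feedback: list[str]) -> str:
    """Hypothetical stand-in for an LLM call: wrong at first, corrected once given feedback."""
    return "2024-12-05" if feedback else "2024-13-05"


answer, attempts = generate_with_feedback(fake_model, [is_iso_date])
print(answer, attempts)  # 2024-12-05 2
```

The design choice that matters here is that failures come back as specific messages rather than a blind retry, which is one concrete reading of "tighten feedback"; `max_attempts` caps the cost when the model never converges.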

The Venture Capital Lens

From a venture perspective, this is where the market gets more interesting. The first wave of AI startups was dominated by wrappers around frontier models. Some created value, but many were thin.

The second wave should be more durable because it is about shaping the problem, not just accessing the model. If OpenAI or Anthropic gives everyone better intelligence, the scarce asset shifts elsewhere. It shifts to whoever owns the workflow, the structured data, the verification loop, and the domain-specific representation of the problem.

That is where defensibility starts to form.

Why Frontier Labs Won’t Capture Everything

Many people assume frontier labs will capture the entire stack. They will capture a lot, but not everything. Even if models improve dramatically, the world itself remains messy.

Most industries do not naturally arrive in a model-friendly format. Someone still has to translate reality into a machine-legible one. Someone still has to build the layer between raw workflow and model execution. That translation layer is where many strong startups will be built.

In that sense, the future of AI may look more like software engineering applied to the real world.

Where Do We Go from Here

The frontier labs are building increasingly powerful reasoning engines. The startups with real leverage will be the ones that know how to turn fuzzy business problems into structured computational objects. The teams that understand this deeply will know how to redesign a workflow so the model can actually succeed.

The mistake is to think the opportunity is in asking better questions. The bigger opportunity is in reshaping the problem so the machine can answer it well.

That is the code-shaped arbitrage.

If you are getting value from this newsletter, consider sharing it with one friend who's curious about AI.